Hello everyone,

I’m Alex (they/them). I’ve participated in two previous DevCamps and am excited to be building things with Holochain! I am currently working on an app that requires geolocation services, and have decided upon a particular approach that I dub ‘s2-wasm’.

I am attempting to compile Google’s S2Geometry library to WebAssembly (targeting WASI) so it can run under Wasmer. The resulting module can be used as an anchor generator mixin for Holochain. S2 is a library of C++ classes that divides the globe into trees of cells using a space-filling (Hilbert) curve projected onto the sphere. The library isn’t really meant for handling exact positions or coordinates. Instead, cells are used to approximate regions, and each cell is identified by a 64-bit ID. S2 depends on OpenSSL, as far as I can tell for its bignum library (used for exact arithmetic) rather than for generating the cell IDs themselves.

You can learn more about the library in this blog post. To visualize how S2 approximates regions, you can also play around with S2’s RegionCoverer class using this tool.

Notably, this library is used for geospatial indexing in apps such as Pokémon GO and Tinder. Tinder’s engineering team wrote an interesting series of articles detailing how S2 helps them manage distributed data storage. (Holochain functions independently of geolocation, though, so the objectives here are different.) [Part 1 | Part 2 | Part 3]

Here’s an overview of how I’m planning to use the lib:

- When the service starts, the front-end client gets the device’s coordinates (this doesn’t have to be GPS; it may use WiFi triangulation, etc.). These are passed to the module along with the “discoverability/query” radius.

- S2’s RegionCoverer returns a vector of 64-bit identifiers for the cells covering that region. To limit how many cells are generated, the min and max cell level should be set to the same value (lower level = bigger cells, higher level = smaller cells). The blockiness of the region isn’t so much a concern as the mitigation of hotspots on the DHT, which can come from having many links to one thing.

- The user’s profile is anchored to each of the cells in the vector.

- When a user needs to query an area, they simply get the cells in their region and query them as anchors in random order.

Alice only needs to locate one link to Bob’s profile, as it is known to them after that point.
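
To make the flow above concrete, here is a minimal sketch in Python. It is not S2: `cover` is a hypothetical stand-in that tiles a plain lat/lng grid (covering the bounding box of the radius and ignoring antimeridian wrap-around), and `AnchorIndex` is an in-memory placeholder for anchors and links on the DHT. All names are made up for illustration.

```python
import math
import random
from collections import defaultdict


def cover(lat, lng, radius_m, level):
    """Hypothetical stand-in for RegionCoverer with min level == max level.

    NOT S2: cells come from a plain lat/lng grid with 2**level columns
    per 360 degrees, covering the bounding box of the radius.
    """
    cell_deg = 360.0 / (2 ** level)
    dlat = radius_m / 111_320.0  # metres per degree of latitude (approx.)
    dlng = dlat / max(math.cos(math.radians(lat)), 1e-6)
    rows = range(math.floor((lat - dlat) / cell_deg),
                 math.floor((lat + dlat) / cell_deg) + 1)
    cols = range(math.floor((lng - dlng) / cell_deg),
                 math.floor((lng + dlng) / cell_deg) + 1)
    return [f"L{level}:{r}:{c}" for r in rows for c in cols]


class AnchorIndex:
    """In-memory placeholder for anchors and links on the DHT."""

    def __init__(self):
        self.links = defaultdict(set)  # cell id -> linked profiles

    def anchor_profile(self, profile, cells):
        # Step 3: link the profile from every cell in the covering.
        for cell in cells:
            self.links[cell].add(profile)

    def find_nearby(self, cells, rng=random):
        # Step 4: visit the querying user's cells in random order.
        order = list(cells)
        rng.shuffle(order)
        found = set()
        for cell in order:
            found |= self.links.get(cell, set())
        return found


index = AnchorIndex()
index.anchor_profile("bob", cover(43.6629, -79.3957, 500, 14))
print(index.find_nearby(cover(43.6629, -79.3957, 500, 14)))
```

Since one link per profile is enough, a real client could stop visiting cells as soon as it has collected the profiles it needs.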

As a user’s discoverability radius increases, an issue arises where many more cells (and anchors) are created, resulting in hotspots. To resolve this, I propose:

- Setting upper and lower bounds for a valid radius, and

- Setting bounds for the cell levels, stepping up the cell size as the radius increases.
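
One way to express both rules is a single function from radius to cell level. The sketch below is only an assumption about how this could look: the radius bounds, level bounds, and `cells_across` target are invented defaults, and the level-0 edge length is a rough average figure (cell edges roughly halve with each level), not a value read from S2 at runtime.

```python
import math

# Rough average S2 cell edge at level 0, in metres (assumption; edges
# roughly halve with every additional level).
LEVEL0_EDGE_M = 7_842_000.0


def approx_edge_m(level):
    """Approximate cell edge length in metres at the given level."""
    return LEVEL0_EDGE_M / (2 ** level)


def level_for_radius(radius_m, min_radius_m=250.0, max_radius_m=50_000.0,
                     min_level=8, max_level=16, cells_across=4):
    """Pick one cell level for a discoverability radius.

    Rejects radii outside the valid bounds, then chooses the finest level
    at which about `cells_across` cells still span the diameter, clamped
    to [min_level, max_level].
    """
    if not min_radius_m <= radius_m <= max_radius_m:
        raise ValueError("radius outside the allowed bounds")
    wanted_edge_m = 2 * radius_m / cells_across
    level = math.floor(math.log2(LEVEL0_EDGE_M / wanted_edge_m))
    return max(min_level, min(max_level, level))


for r in (250, 1_000, 4_000, 50_000):
    print(r, level_for_radius(r), round(approx_edge_m(level_for_radius(r))))
```

Because the level only drops as the radius grows, the number of anchors per user stays roughly constant instead of growing with the square of the radius.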

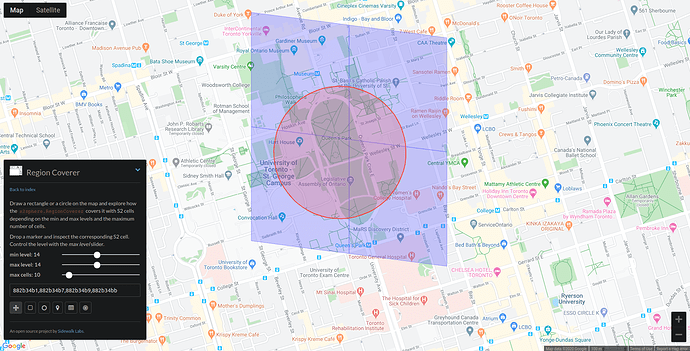

As an example, looking at the University of Toronto campus with the RegionCoverer tool:

Level 14 cells appear to be appropriate for this radius.

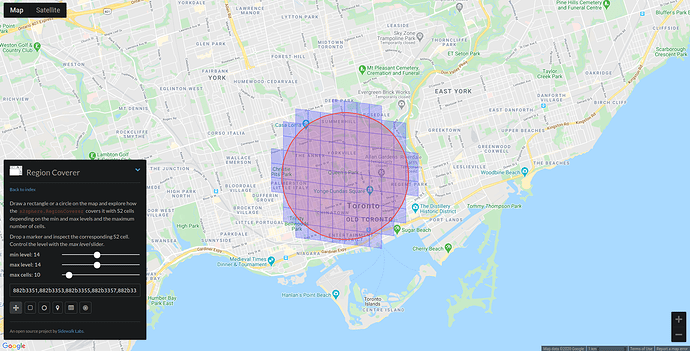

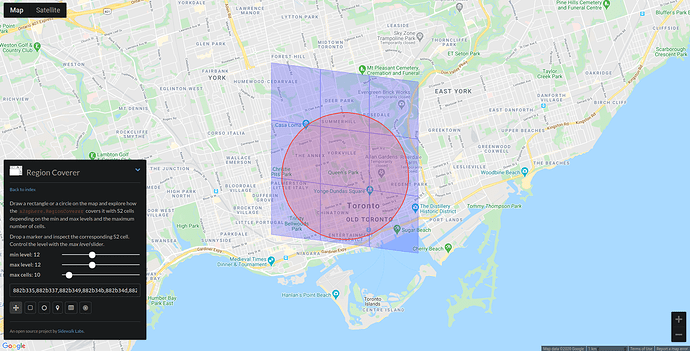

Expanding out, though, level 14 is creating more anchors than we’d like…

Level 12 seems much better:

A user’s profile is anchored to cells at the level that matches their preferred radius. When querying, the entire tree of valid cells should be passed back. The client first queries cells on its own level, then moves on to other levels. An added benefit of this approach is that users with similar travel preferences are more likely to find each other first.
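
The level-by-level lookup can be sketched without the S2 library, because a cell’s ancestors can be derived from its 64-bit ID with bit operations. The layout assumed here (3 face bits, 2 bits per level, then a single trailing 1 bit) reflects my understanding of S2 cell IDs; the helper names and the “nearest level first” ordering are illustrative choices. The sketch handles a single cell, whereas a real query would do this for every cell in the covering.

```python
MAX_LEVEL = 30  # leaf level in S2's cell hierarchy


def lsb_for_level(level):
    """Position of the trailing 1 bit for a cell at `level`."""
    return 1 << (2 * (MAX_LEVEL - level))


def cell_level(cell_id):
    """Recover a cell's level from its lowest set bit."""
    lsb = cell_id & -cell_id
    return MAX_LEVEL - (lsb.bit_length() - 1) // 2


def parent(cell_id, level):
    """Ancestor of `cell_id` at a coarser (or equal) `level`."""
    lsb = lsb_for_level(level)
    return (cell_id & -lsb) | lsb


def ancestors(cell_id, min_level, max_level):
    """Map each valid level to the cell containing `cell_id` at that level."""
    top = min(max_level, cell_level(cell_id))
    return {lvl: parent(cell_id, lvl) for lvl in range(min_level, top + 1)}


def query_order(own_level, min_level, max_level):
    """Own level first, then the remaining levels by distance from it."""
    return sorted(range(min_level, max_level + 1),
                  key=lambda lvl: (abs(lvl - own_level), lvl))


# Arbitrary example leaf cell on face 2 (position bits chosen at random).
leaf = (2 << 61) | (0x123456789ABCDEF << 1) | 1
tree = ancestors(leaf, 10, 14)
for lvl in query_order(12, 10, 14):
    print(lvl, hex(tree[lvl]))
```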

Even if the cell level is progressively stepped down, hotspots might still become an issue if many users are linking to a single large anchor. To solve this, a procedural naming convention can be used to produce multiple “dimensions” of cells. If the number of links reaches a certain limit, a new dimension is generated. During a query, nodes should step up dimensions until no further links are returned. This could also be useful for ‘first n people to check in at x location’-type validation.
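
Here is a small sketch of the “dimensions” idea, with an in-memory dictionary standing in for the DHT. The `cell#dimension` naming scheme, the class name, and the link limit of 3 are all hypothetical choices for illustration.

```python
from collections import defaultdict


class DimensionedAnchors:
    """Spreads links for one cell across numbered anchor 'dimensions'."""

    def __init__(self, link_limit=3):
        self.link_limit = link_limit
        self.links = defaultdict(list)  # anchor name -> linked profiles

    @staticmethod
    def anchor_name(cell_id, dimension):
        # Procedural name: every node can derive it without coordination.
        return f"{cell_id}#{dimension}"

    def add_link(self, cell_id, profile):
        """Link to the first dimension that still has room; return its index."""
        dimension = 0
        while len(self.links[self.anchor_name(cell_id, dimension)]) >= self.link_limit:
            dimension += 1
        self.links[self.anchor_name(cell_id, dimension)].append(profile)
        return dimension

    def query(self, cell_id):
        """Step up dimensions until one returns no links."""
        results, dimension = [], 0
        while True:
            batch = self.links.get(self.anchor_name(cell_id, dimension), [])
            if not batch:
                return results
            results.extend(batch)
            dimension += 1


anchors = DimensionedAnchors(link_limit=3)
used = [anchors.add_link("cell-a", f"user{i}") for i in range(7)]
print(used)                     # dimension chosen for each link
print(anchors.query("cell-a"))  # all seven profiles, in arrival order
```

In this sketch the query results come back in arrival order, so a ‘first n people’ check would amount to looking at the first n entries. On a real DHT, ordering and concurrent writes would need validation rules that are not shown here.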

What about privacy and security?

I’m intending to set user profiles to private by default. When a user gets a link to another person’s profile, they additionally request a temporary access token for that profile. This way, each user can be given granular control over the visibility of their own profile (e.g. only grant up to n tokens within 24 hours, only make my profile visible to n people of this gender within 24 hours, unlink from all cells, revoke all tokens, view granted tokens, etc.)
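
As a rough sketch of what such granular control might look like, the class below grants at most `max_per_day` tokens in any 24-hour window and supports viewing and revoking them. It is a plain in-memory model with hypothetical names, not Holochain’s own access mechanism, and the current time is passed in explicitly to keep the example deterministic.

```python
import secrets

DAY_S = 24 * 60 * 60  # token lifetime and rate window, in seconds


class TokenIssuer:
    """Per-profile issuer of temporary access tokens (illustrative only)."""

    def __init__(self, max_per_day):
        self.max_per_day = max_per_day
        self.granted = {}  # token -> (grantee, issued_at)

    def _recent_count(self, now):
        return sum(1 for _, issued in self.granted.values()
                   if now - issued < DAY_S)

    def grant(self, grantee, now):
        """Return a new token, or None if the 24-hour quota is used up."""
        if self._recent_count(now) >= self.max_per_day:
            return None
        token = secrets.token_hex(16)
        self.granted[token] = (grantee, now)
        return token

    def is_valid(self, token, now):
        entry = self.granted.get(token)
        return entry is not None and now - entry[1] < DAY_S

    def view_granted(self):
        return dict(self.granted)

    def revoke_all(self):
        self.granted.clear()


issuer = TokenIssuer(max_per_day=2)
t1 = issuer.grant("bob", now=0)
t2 = issuer.grant("carol", now=10)
print(issuer.grant("dave", now=20))   # None: quota reached
print(issuer.is_valid(t1, now=100))   # True
```

Other policies from the list above (for example, per-attribute limits) would slot in as extra checks inside `grant`.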

I’ve been considering how to mitigate the possibility of nodes spamming queries, as well as anchoring to and querying areas that they are not located in. I was thinking that each node could maintain a public ledger of its anchor and query history. The anchors or queries themselves wouldn’t need to be revealed, only a header with a timestamp. Before Alice’s node grants an access token to Bob, it first performs lazy validation on Bob’s ledger. I’ve imagined the possibility of using AI to handle this. MindSpore Wasm strikes me as particularly interesting, as its distributed deep learning model seems to fit Holochain’s paradigm surprisingly well. I see it as potentially useful for a few other things, such as client-side moderation. This is definitely its own module, however.
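
Before reaching for AI, a very simple form of lazy validation could just count how many header timestamps fall inside any sliding time window. The sketch below assumes the ledger is available as a list of Unix timestamps; the function names and thresholds are hypothetical, and it only addresses spamming, not anchoring or querying from a false location.

```python
def max_events_in_window(timestamps, window_s):
    """Largest number of headers inside any sliding window of `window_s` seconds."""
    ts = sorted(timestamps)
    best = start = 0
    for end in range(len(ts)):
        # Shrink the window until it spans less than window_s seconds.
        while ts[end] - ts[start] >= window_s:
            start += 1
        best = max(best, end - start + 1)
    return best


def passes_lazy_validation(header_timestamps, window_s=3600, max_events=20):
    """True if the ledger never exceeds `max_events` headers per window."""
    return max_events_in_window(header_timestamps, window_s) <= max_events


bobs_ledger = [0, 10, 20, 4000]  # seconds; made-up example data
print(max_events_in_window(bobs_ledger, 3600))
print(passes_lazy_validation(bobs_ledger, max_events=2))
```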

Why this approach?

- Code reuse: instead of writing a ton of new code to handle geolocation, we can rely on existing libraries that are well-supported. The module can be run with Holochain-wasmer, for example. Maybe OpenSSL could even be replaced, perhaps by Rustls or by linking in Holochain’s own TLS lib (?). I do intend to publish s2-wasm to the WebAssembly Package Manager for wider access.

- Versatility: a bare-bones, generalized approach makes the module as relevant as possible to other Holochain devs. S2 is a very comprehensive lib by itself, enabling all sorts of geometric operations on the sphere.

- Maintain Holochain’s unenclosability: needless to say, privacy of geolocation data is a huge area of concern. This method keeps things private, as the coordinates are handled only on the device at the edge. Holo hosts would only be able to track things pseudonymously. Additionally, this method could work over different transport protocols during a natural disaster or other network interruption.

- Further, this aligns with Holochain’s virtue of consent-driven technology. Giving each person granular control of access tokens maximizes agency for everyone.

- Performance: C++/Rust compiled to Wasm is fast.

- S2Geometry and OpenSSL (since version 3.0) both use the Apache 2.0 license, which, to my knowledge, would permit s2-wasm to be released under the Cryptographic Autonomy License.

Right now, I’m troubleshooting the build script for OpenSSL on WAPM. I tweaked the script to pull and unzip the latest version, but I’m having issues building it and working out how to link it to S2 properly. I’d love to get any feedback and see if others are interested!

Let me know when you are free to have a call.

This is something I have been thinking a lot about for my project Our World because the smartphone version phase 1 is a crowd sourced decentralized distributed replacement for Google Maps, Google Earth etc…
